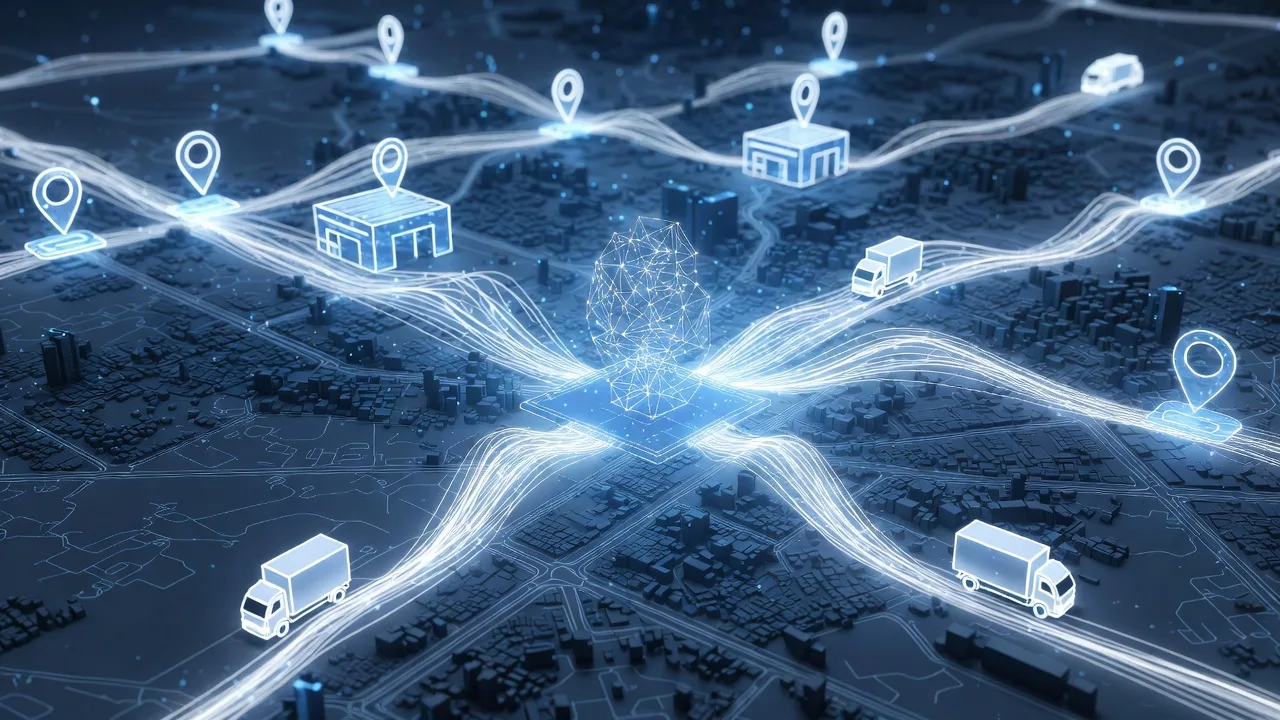

Supply Chain Management Software Modules: Driving Enterprise Logistics with AI Agents

Supply chain management (SCM) has evolved from a simple operational necessity into a strategic driver for enterprise success. With increasing global complexity in logistics, transportation, and inventory management, businesses require software that not only tracks goods but also predicts, analyzes, and automates decisions. AI-powered SCM software is no longer a futuristic concept; it is the engine behind modern, efficient, and resilient supply chains.

For enterprises seeking to implement AI-driven solutions, understanding the core software modules of SCM systems and how AI agents can enhance each is critical. This article breaks down these modules, highlights their AI potential, and provides practical insights for logistics and transportation decision-makers.

1. Overview of Supply Chain Management Software

SCM software integrates processes, people, and data across the supply chain, creating visibility and enabling smarter decision-making. Enterprise SCM solutions typically include modules for planning, execution, monitoring, and analytics. For logistics and transportation, the adoption of AI agents amplifies capabilities such as predictive routing, real-time exception handling, and autonomous task assignment.

| Key Feature | AI Enhancement | Enterprise Benefit |
|---|---|---|
| Inventory Visibility | AI predicts stock-outs and excess inventory | Reduced carrying costs, improved fulfillment |
| Transportation Management | AI agents optimize routes, schedules, and fleet utilization | Lower fuel costs, faster delivery |
| Demand Forecasting | Machine learning predicts demand trends | Better production planning and procurement |
| Supplier Collaboration | AI monitors supplier reliability and risk | Minimized disruptions and procurement delays |
| Warehouse Management | AI-driven robots and task scheduling | Increased throughput, reduced errors |

2. Core SCM Modules for AI-Powered Logistics

2.1 Demand Planning and Forecasting

Demand planning is the backbone of any supply chain. AI algorithms analyze historical sales, market trends, weather data, and external events to forecast demand with higher accuracy. AI agents can automatically adjust procurement schedules, notify purchasing teams, and trigger alerts for anomalies.

Key AI Functions in Demand Planning:

- Predictive analytics for SKU-level forecasting

- Automated scenario simulation (e.g., demand surge during holidays)

- Continuous learning from market and logistics data

| Traditional Forecasting | AI-Driven Forecasting |
|---|---|
| Manual Excel-based models | Faster, more accurate predictions |
| Historical trend extrapolation | Dynamic adjustment to real-world changes |
| Reactive adjustments | Proactive alerts and autonomous actions |
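
To make SKU-level forecasting and scenario simulation more concrete, here is a minimal sketch using simple exponential smoothing. This is a deliberately basic stand-in for the machine learning models a commercial SCM product would use; the function names, the smoothing factor, and the 25% holiday uplift are illustrative assumptions.

```python
def exponential_smoothing_forecast(history, alpha=0.5):
    """One-step-ahead forecast via simple exponential smoothing.

    `alpha` (assumed default 0.5) controls how strongly recent
    observations outweigh older ones.
    """
    if not history:
        raise ValueError("history must contain at least one observation")
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    level = history[0]
    for observed in history[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def apply_scenario(forecast, uplift):
    """Scale a baseline forecast by a hypothetical uplift (0.25 = +25%)."""
    return forecast * (1 + uplift)


# Hypothetical weekly unit sales for one SKU.
weekly_units = [100, 120, 110, 130]
baseline = exponential_smoothing_forecast(weekly_units, alpha=0.5)  # 120.0
holiday_case = apply_scenario(baseline, uplift=0.25)                # 150.0
```

In practice the smoothing factor would be tuned per SKU, and the scenario uplift would come from historical holiday data rather than a fixed guess.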

2.2 Inventory Management

Inventory management ensures optimal stock levels while preventing overstocking or shortages. AI agents track inventory in real time across warehouses and stores, flagging discrepancies and predicting replenishment needs.

AI Capabilities:

- Smart reorder point calculation

- Real-time inventory tracking using IoT sensors

- AI alerts for damaged or misplaced goods

| Module | AI Impact |
|---|---|
| Stock Tracking | Predicts out-of-stock events before they occur |
| Warehouse Optimization | Suggests storage layout for efficiency |
| Returns Management | Analyzes returns patterns to reduce losses |
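
A smart reorder point can be illustrated with the textbook formula: expected demand over the lead time plus a safety stock sized to a target service level. The sketch below assumes roughly normal daily demand and a fixed lead time; the function names and sample figures are hypothetical, and a real system would likely learn these parameters continuously.

```python
import math
from statistics import NormalDist, mean, stdev


def reorder_point(daily_demand, lead_time_days, service_level=0.95):
    """Reorder point = lead-time demand + safety stock.

    Safety stock = z * sigma_daily * sqrt(lead_time_days), where z is the
    normal quantile for the target service level (an assumed model).
    """
    avg = mean(daily_demand)
    sigma = stdev(daily_demand)  # needs at least two observations
    z = NormalDist().inv_cdf(service_level)
    safety_stock = z * sigma * math.sqrt(lead_time_days)
    return avg * lead_time_days + safety_stock


def needs_replenishment(on_hand, on_order, rop):
    """Trigger a reorder when the inventory position falls to the ROP."""
    return on_hand + on_order <= rop


# Hypothetical daily demand history for one SKU, four-day supplier lead time.
demand = [8, 12, 10, 9, 11]
rop = reorder_point(demand, lead_time_days=4, service_level=0.95)
print(round(rop, 1), needs_replenishment(on_hand=30, on_order=0, rop=rop))
```

Raising the service level increases the safety stock, which is the trade-off between carrying cost and stock-out risk that the module is meant to manage.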

2.3 Transportation Management System (TMS)

Transportation is a major cost center in logistics. AI agents within TMS modules can optimize routes, balance workloads across drivers, and dynamically reroute shipments based on real-time traffic or weather conditions.

AI Applications in TMS:

- Predictive route planning for cost and time savings

- Load consolidation to maximize fleet utilization

- Real-time exception handling for delays or disruptions

| TMS Feature | AI Enhancement | Enterprise Outcome |
|---|---|---|
| Route Optimization | AI predicts fastest and least-cost routes | Reduced delivery time and fuel cost |
| Carrier Selection | Evaluates performance, reliability, and cost | Improved service levels |
| Shipment Tracking | Real-time monitoring with predictive ETAs | Higher customer satisfaction |
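
As a rough illustration of route planning, the sketch below uses a greedy nearest-neighbor heuristic over straight-line distances. It is not how any particular TMS works: production systems typically use road-network travel times, delivery windows, and vehicle capacities, and the heuristic here does not guarantee the shortest route. The coordinates and function names are assumptions.

```python
import math


def nearest_neighbor_route(depot, stops):
    """Build a round trip by always driving to the closest unvisited stop."""
    remaining = list(stops)
    route = [depot]
    current = depot
    while remaining:
        nxt = min(remaining, key=lambda stop: math.dist(current, stop))
        remaining.remove(nxt)
        route.append(nxt)
        current = nxt
    route.append(depot)  # return to the depot
    return route


def route_length(route):
    """Total straight-line distance along consecutive route points."""
    return sum(math.dist(a, b) for a, b in zip(route, route[1:]))


# Hypothetical (x, y) positions in kilometers.
depot = (0, 0)
stops = [(5, 0), (1, 0), (3, 0)]
route = nearest_neighbor_route(depot, stops)
print(route)                # [(0, 0), (1, 0), (3, 0), (5, 0), (0, 0)]
print(route_length(route))  # 10.0
```

Dynamic rerouting can be approximated by re-running the same function from the truck's current position with the stops still outstanding.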

2.4 Supplier and Procurement Management

Procurement involves sourcing materials from suppliers and ensuring timely delivery. AI agents assess supplier performance, predict potential disruptions, and recommend alternative sourcing strategies.

AI in Supplier Management:

- Risk scoring based on historical performance and external factors

- Automatic alerts for delayed shipments or compliance issues

- Predictive spend analysis to optimize procurement budgets

| Supplier Module | AI Function | Enterprise Benefit |
|---|---|---|
| Supplier Scorecards | Automated performance scoring | Improved supplier reliability |
| Contract Management | AI suggests negotiation strategies | Cost savings and compliance |
| Risk Management | Predicts disruption probability | Resilient supply chain |
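
Supplier risk scoring can be sketched as a weighted blend of historical performance and an external risk signal. The weights, field names, and the 0 to 100 scale below are illustrative assumptions, not an industry standard; a learned model would normally replace the fixed weights.

```python
from dataclasses import dataclass


@dataclass
class SupplierStats:
    name: str
    on_time_rate: float   # share of deliveries on time, 0..1
    defect_rate: float    # share of units rejected, 0..1
    external_risk: float  # hypothetical external index, 0..1


# Assumed weights; they sum to 1 so the score stays within 0..100.
WEIGHTS = {"late": 0.5, "defect": 0.3, "external": 0.2}


def risk_score(supplier):
    """Return a 0..100 risk score (higher means riskier)."""
    raw = (
        WEIGHTS["late"] * (1 - supplier.on_time_rate)
        + WEIGHTS["defect"] * supplier.defect_rate
        + WEIGHTS["external"] * supplier.external_risk
    )
    return round(100 * raw, 1)


def rank_by_risk(suppliers):
    """Sort suppliers from riskiest to safest."""
    return sorted(suppliers, key=risk_score, reverse=True)


suppliers = [
    SupplierStats("Acme", on_time_rate=0.98, defect_rate=0.01, external_risk=0.1),
    SupplierStats("Borealis", on_time_rate=0.80, defect_rate=0.05, external_risk=0.6),
]
for s in rank_by_risk(suppliers):
    print(s.name, risk_score(s))
```

A scorecard like this gives procurement teams a consistent basis for alerts, for example flagging any supplier whose score crosses an agreed threshold.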

2.5 Warehouse Management System (WMS)

Warehouses are operational hubs where AI agents can significantly improve efficiency. From task assignment to robotics integration, AI in WMS reduces manual effort and errors.

WMS AI Applications:

- Dynamic slotting for faster picking and packing

- Predictive labor allocation for peak periods

- Autonomous guided vehicles (AGVs) for material handling

| WMS Feature | AI Benefit |
|---|---|
| Inventory Slotting | Optimized storage for speed and space |
| Order Picking | AI prioritizes picking sequence to reduce travel time |
| Labor Scheduling | Predicts workforce needs to prevent bottlenecks |
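
Dynamic slotting can be approximated with a simple rule: place the most frequently picked SKUs in the slots closest to the packing station. The sketch below assumes one SKU per slot and ignores item size and weight; the SKU names, slot IDs, and distances are made up for illustration.

```python
def assign_slots(pick_counts, slot_distances):
    """Map the busiest SKUs to the nearest slots.

    pick_counts:    {sku: picks in the recent period}
    slot_distances: {slot_id: walking distance to packing, in meters}
    """
    if len(pick_counts) > len(slot_distances):
        raise ValueError("not enough slots for all SKUs")
    skus_by_demand = sorted(pick_counts, key=pick_counts.get, reverse=True)
    slots_by_distance = sorted(slot_distances, key=slot_distances.get)
    return dict(zip(skus_by_demand, slots_by_distance))


# Hypothetical pick history and slot layout.
pick_counts = {"SKU-A": 5, "SKU-B": 50, "SKU-C": 20}
slot_distances = {"S1": 30, "S2": 5, "S3": 12}
plan = assign_slots(pick_counts, slot_distances)
print(plan)  # {'SKU-B': 'S2', 'SKU-C': 'S3', 'SKU-A': 'S1'}
```

Re-running the assignment as pick counts change is what makes the slotting "dynamic"; a real WMS would also weigh the cost of physically moving stock.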

2.6 Analytics and Reporting

Data is the most valuable asset in modern supply chains. AI agents in analytics modules process complex datasets, providing actionable insights and predictive intelligence.

AI Analytics Capabilities:

- Predictive performance dashboards for logistics KPIs

- Anomaly detection in operations and procurement

- Automated reporting tailored to management or operational needs

| Analytics Module | AI Enhancement | Benefit |
|---|---|---|
| Operational KPIs | Predictive trends and deviations | Proactive issue resolution |
| Cost Analysis | AI-driven cost simulations | Informed budgeting decisions |
| Customer Service | Predictive delivery estimates | Improved satisfaction and retention |
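
Anomaly detection on a logistics KPI can be illustrated with a basic z-score check: flag any value that sits unusually far from the series mean. This is one simple statistical technique chosen for illustration; the threshold of 2.0 and the sample lead times are assumptions, and production analytics would usually account for trend and seasonality.

```python
from statistics import mean, stdev


def find_anomalies(values, threshold=2.0):
    """Return indexes of values more than `threshold` std devs from the mean."""
    if len(values) < 2:
        return []
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # a flat series has no outliers
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]


# Hypothetical delivery lead times in hours; the last shipment is an outlier.
lead_times = [10, 11, 9, 10, 10, 11, 9, 10, 30]
print(find_anomalies(lead_times))  # [8]
```

A reporting agent could run a check like this on each KPI and include only the flagged items in its exception report.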

3. Integrating AI Agents Across SCM Modules

AI agents do not operate in isolation; they work across modules to create a connected, intelligent supply chain ecosystem. Examples include:

- An AI agent that detects an incoming shipment delay and automatically reroutes trucks, notifies the warehouse, and updates the ERP system.

- Agents that continuously optimize inventory levels based on real-time sales and procurement data.

- Multi-agent systems collaborating to simulate “what-if” scenarios across logistics, supplier, and warehouse operations.

| Integration Example | AI Interaction | Result |
|---|---|---|
| Delayed Shipment | TMS agent triggers warehouse and procurement agents | Minimizes impact on delivery deadlines |
| Demand Spike | Forecasting agent informs inventory and procurement agents | Prevents stock-outs |
| Multi-Warehouse Coordination | WMS agents exchange data | Optimized resource allocation |
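
One common way to connect agents across modules is a publish/subscribe pattern, sketched below for the delayed-shipment example. The `EventBus` class, topic name, agent functions, and the 24-hour escalation rule are all hypothetical; a real deployment would more likely rely on a message broker and the vendor's own integration APIs.

```python
from collections import defaultdict


class EventBus:
    """Minimal in-memory publish/subscribe hub (illustrative only)."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, topic, handler):
        self._handlers[topic].append(handler)

    def publish(self, topic, payload):
        # Call every subscriber and collect the actions they propose.
        return [handler(payload) for handler in self._handlers[topic]]


def warehouse_agent(event):
    return f"warehouse: reschedule dock for {event['shipment_id']}"


def procurement_agent(event):
    # Assumed rule: only long delays justify alternative sourcing.
    if event["delay_hours"] >= 24:
        return f"procurement: source alternative for {event['shipment_id']}"
    return f"procurement: no action for {event['shipment_id']}"


bus = EventBus()
bus.subscribe("shipment.delayed", warehouse_agent)
bus.subscribe("shipment.delayed", procurement_agent)

# The TMS agent would publish this when it detects a delay.
actions = bus.publish("shipment.delayed", {"shipment_id": "SHP-1", "delay_hours": 36})
print(actions)
```

Because each agent only subscribes to the topics it cares about, new modules can be added without changing the agent that raised the event.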

4. Benefits of AI-Powered SCM for Enterprises

For enterprise buyers, investing in AI-powered SCM software is no longer optional; it is a strategic necessity. Key benefits include:

- Operational Efficiency: Reduced manual work, better resource utilization, faster decision-making

- Cost Reduction: Lower transportation, labor, and inventory costs

- Resilience: Predictive insights to handle disruptions and supply chain risks

- Customer Satisfaction: Accurate delivery estimates, fewer stock-outs, and enhanced service levels

- Scalability: AI systems adapt to increased complexity and scale of operations

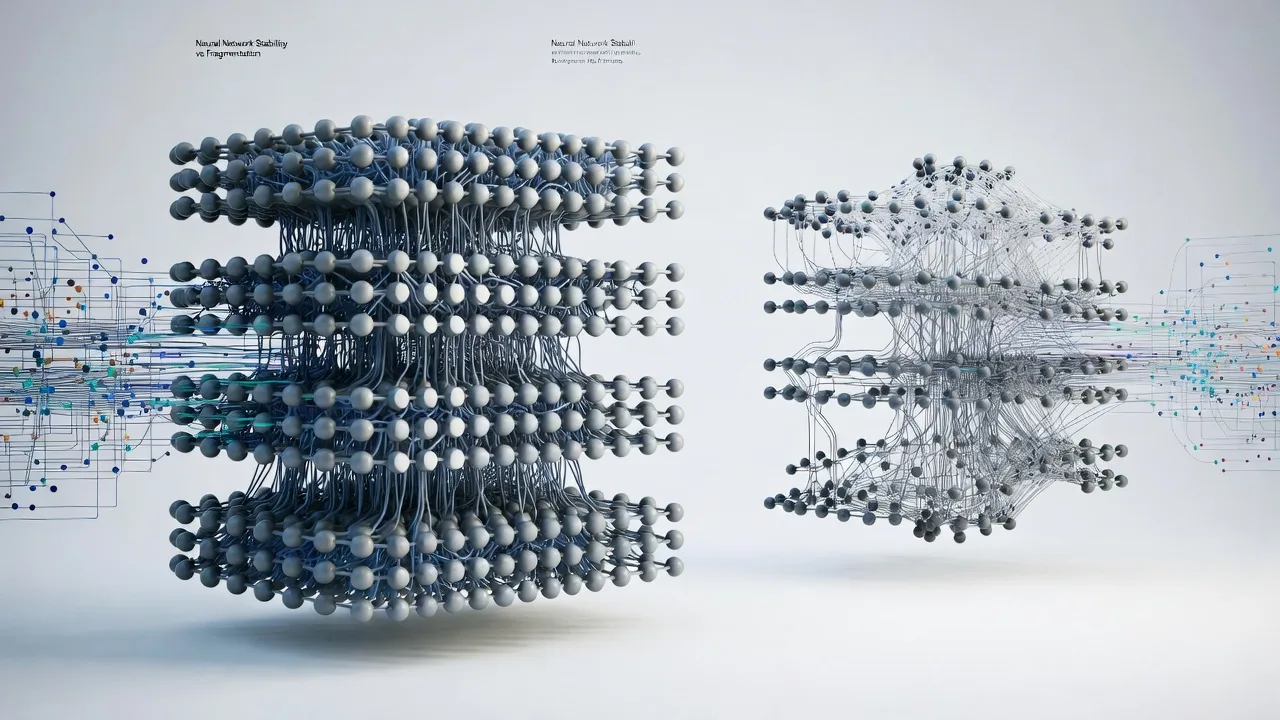

5. Implementation Considerations

When deploying AI-enhanced SCM software:

- Data Quality: Ensure accurate, real-time data from all touchpoints. AI agents are only as good as the data they process.

- Modular Adoption: Start with high-impact modules such as TMS or inventory management before scaling enterprise-wide.

- Integration: Ensure AI modules integrate with ERP, CRM, and other enterprise systems.

- Continuous Learning: AI models must evolve with new patterns in demand, logistics disruptions, and supplier performance.

- Change Management: Train employees to work alongside AI agents for maximum operational synergy.
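
The data quality point can be enforced with a lightweight validation gate that runs before records reach any AI agent. The sketch below checks for missing fields, negative quantities, and stale timestamps; the field names and the one-hour freshness limit are assumptions to adapt to the actual data feeds.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical minimum schema for an inventory record.
REQUIRED_FIELDS = ("sku", "location", "quantity", "updated_at")


def validate_record(record, now, max_age=timedelta(hours=1)):
    """Return a list of data quality problems (empty list means clean)."""
    problems = [f"missing {f}" for f in REQUIRED_FIELDS if record.get(f) is None]
    quantity = record.get("quantity")
    if isinstance(quantity, (int, float)) and quantity < 0:
        problems.append("negative quantity")
    updated_at = record.get("updated_at")
    if isinstance(updated_at, datetime) and now - updated_at > max_age:
        problems.append("stale record")
    return problems


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
record = {
    "sku": "SKU-A",
    "location": None,
    "quantity": -3,
    "updated_at": now - timedelta(hours=5),
}
print(validate_record(record, now))
```

Records that fail the gate can be quarantined and reported, so that downstream forecasts and reorder decisions are not skewed by bad inputs.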

People Also Ask

Key modules include Demand Planning, Inventory Management, Transportation Management (TMS), Supplier and Procurement Management, Warehouse Management (WMS), and Analytics & Reporting. AI agents enhance each module with predictive, autonomous, and real-time decision-making capabilities.

AI agents optimize routing, predict delays, manage fleet utilization, and automatically reroute shipments based on real-time conditions, reducing costs and improving delivery accuracy.

Yes. AI agents analyze historical sales, procurement data, and market trends to predict demand, automate reorder points, and flag inventory discrepancies across warehouses.

ROI comes from reduced operational costs, fewer stock-outs, improved supplier performance, faster delivery times, and enhanced customer satisfaction. Enterprise-level automation also scales efficiency as the business grows.

Integration complexity depends on the legacy systems involved. Modular adoption, API-based connections, and phased deployment help enterprises minimize disruptions while maximizing AI benefits.