Transforming US Healthcare: Types of Automation in Healthcare

The US medical automation market, valued at approximately $6.2 billion in 2025, is projected to reach $11 billion by 2035, growing at a compound annual growth rate of 5.91%. This growth is fueled by mounting pressure to reduce healthcare costs while improving patient outcomes, a challenge that automation is well positioned to address.
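As a quick sanity check on the growth figure, the compound annual growth rate can be recomputed from the two endpoint values. The sketch below uses the rounded figures quoted above, so it lands near the cited rate (roughly 5.9%) rather than exactly on it; the function name is ours, for illustration only.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.059 means 5.9%)."""
    return (end_value / start_value) ** (1 / years) - 1

# Rounded figures quoted in the text: about $6.2B in 2025, $11B in 2035.
rate = cagr(6.2, 11.0, 10)
print(f"Implied CAGR: {rate:.2%}")
```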

AI agents are transforming US healthcare by automating complex administrative, diagnostic, and patient communication workflows, moving beyond simple task automation to intelligent orchestration across departments.

Why Automation Is No Longer Optional for US Healthcare

US healthcare stands at a crossroads. Physicians spend an average of four hours daily on administrative records and manual data entry, with many reporting excessive scrolling, pop-ups, and redundant documentation. This administrative burden contributes directly to clinician burnout while diverting attention from patient care.

The automation imperative stems from three converging factors:

- Rising operational costs creating unsustainable pressure on healthcare margins

- Increasing patient expectations for digital, responsive healthcare experiences

- Regulatory complexity requiring more sophisticated compliance management

One hospital achieved an 80% reduction in time spent on data-related administrative tasks after implementing healthcare automation software. This represents more than an efficiency gain: it is the reclamation of clinical time for what matters most, patient care.

Administrative Automation: The Foundation of Healthcare AI

Administrative automation represents the most immediate opportunity for ROI in healthcare organizations. These technologies handle repetitive, rule-based tasks that consume disproportionate staff time.

Appointment Scheduling and Management

Traditional scheduling creates significant administrative drag. Intelligent scheduling systems now enable patients to self-book appointments based on real-time provider availability, with automated reminders reducing no-show rates substantially.

At Nunar, we’ve implemented smart scheduling agents that do more than just book appointments. These systems analyze patterns to optimize provider schedules, automatically handle rescheduling requests within policy parameters, and even trigger pre-appointment preparations like form collection and insurance verification.
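To make "rescheduling within policy parameters" concrete, here is a minimal sketch of the kind of check such an agent might run. The policy fields (24 hours of notice, at most two reschedules) and all names are assumptions chosen for illustration, not a description of any production system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

@dataclass(frozen=True)
class ReschedulePolicy:
    min_notice: timedelta = timedelta(hours=24)  # assumed clinic rule
    max_reschedules: int = 2                     # assumed clinic rule

@dataclass
class Appointment:
    start: datetime
    reschedule_count: int = 0

def handle_reschedule(appt: Appointment, new_start: datetime, now: datetime,
                      policy: ReschedulePolicy = ReschedulePolicy()) -> str:
    """Approve automatically when the request fits policy, else hand off to staff."""
    enough_notice = appt.start - now >= policy.min_notice
    under_limit = appt.reschedule_count < policy.max_reschedules
    if enough_notice and under_limit and new_start > now:
        appt.start = new_start
        appt.reschedule_count += 1
        return "auto_approved"
    return "needs_staff_review"
```

Requests that fall outside the policy are not rejected outright; they are routed to a person, which keeps the agent's autonomy bounded.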

Billing and Claims Processing

Revenue cycle management presents one of the most fertile grounds for automation. AI agents can now automatically generate invoices, submit insurance claims, perform real-time eligibility checks, and identify potential denial risks before submission.

One of our client implementations reduced claim rejections by 25% through predictive analysis of denial patterns and automated correction of common errors. The system flags discrepancies between clinical documentation and billing codes, then either automatically corrects them or escalates to human staff for review.
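The correct-or-escalate pattern described above can be sketched in a few lines. The code values and the corrections table are placeholders invented for this example (they are not real billing codes), and a real system would derive its corrections from denial history rather than a hard-coded dictionary.

```python
# Hypothetical table of known, safe-to-apply fixes (placeholder codes only).
COMMON_CORRECTIONS = {"PROC-100X": "PROC-100"}

def review_claim(billed_codes: list[str], documented_codes: set[str]):
    """Split billed codes into those ready to submit and those needing human review."""
    ready, escalate = [], []
    for code in billed_codes:
        fix = COMMON_CORRECTIONS.get(code)
        if code in documented_codes:
            ready.append(code)        # billing matches clinical documentation
        elif fix is not None and fix in documented_codes:
            ready.append(fix)         # known error with a documented correction
        else:
            escalate.append(code)     # no safe automatic fix: send to staff
    return ready, escalate
```

For example, `review_claim(["PROC-100X", "PROC-999"], {"PROC-100"})` corrects the first code and escalates the second.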

Patient Registration and Check-in

Digital intake forms integrated directly with EHR systems eliminate redundant data entry while improving accuracy. Advanced systems can automatically verify insurance coverage, collect co-payments, and flag potential coverage issues before appointments.

We’ve found that automated patient registration doesn’t just save staff time; it significantly improves the patient experience by reducing wait times and eliminating frustrating paperwork repetition.

Diagnostic and Medical Imaging Automation

AI is revolutionizing diagnostics by enhancing human expertise with scalable computational power. These technologies don’t replace clinicians but amplify their capabilities.

Medical Imaging Analysis

AI algorithms can interpret radiology, pathology, and dermatology images to identify anomalies with remarkable accuracy. At Moorfields Eye Hospital, an AI system developed with DeepMind can identify more than 50 eye diseases with 94% accuracy, matching the performance of top ophthalmologists.

These systems excel at prioritizing cases: AI imaging tools can flag critical findings like strokes, pulmonary embolisms, or hemorrhages for immediate review, potentially saving crucial minutes in emergency situations.
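One simple way to picture this prioritization is a reading worklist backed by a priority queue: studies with a flagged critical finding jump ahead, and everything else stays in arrival order. This is a sketch under our own assumptions (the class, the finding labels, and the two-level priority are illustrative), not how any particular imaging product works.

```python
import heapq
from itertools import count

# Finding labels taken from the examples in the text; the label strings are assumed.
CRITICAL_FINDINGS = {"stroke", "pulmonary embolism", "hemorrhage"}

class Worklist:
    """Reading queue: flagged studies first, then first-come, first-served."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker that preserves arrival order

    def add(self, study_id: str, ai_findings: list[str]) -> None:
        priority = 0 if CRITICAL_FINDINGS & set(ai_findings) else 1
        heapq.heappush(self._heap, (priority, next(self._arrival), study_id))

    def next_study(self) -> str:
        return heapq.heappop(self._heap)[2]
```

The radiologist still reads every study; the queue only changes the order in which they appear.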

Symptom Checkers and Triage Chatbots

AI-powered symptom checkers guide patients through preliminary assessments before they see healthcare providers, leading to more efficient in-person visits. These systems use branching logic to ask relevant follow-up questions, providing both patients and providers with structured information before consultations.

For health systems, these tools help direct patients to the appropriate level of care (self-care, primary care, urgent care, or emergency services), optimizing resource utilization across the network.
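Branching logic of this kind is often represented as a small decision tree whose leaves are care levels. The sketch below shows the mechanics only: the questions and routing are placeholders we made up for illustration and are not clinical guidance.

```python
CARE_LEVELS = {"self_care", "primary_care", "urgent_care", "emergency"}

# Placeholder tree for illustration only; real content is written and validated by clinicians.
TREE = {
    "breathing": {"question": "Are you having trouble breathing?",
                  "yes": "emergency", "no": "fever"},
    "fever": {"question": "Have you had a fever for more than three days?",
              "yes": "primary_care", "no": "self_care"},
}

def triage(answers: dict[str, bool], start: str = "breathing"):
    """Walk the tree with yes/no answers keyed by node id; return care level and transcript."""
    node, transcript = start, []
    while node not in CARE_LEVELS:
        step = TREE[node]
        answer = answers[node]
        transcript.append((step["question"], answer))
        node = step["yes"] if answer else step["no"]
    return node, transcript
```

The returned transcript is the "structured information" a provider would see before the consultation.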

Patient Monitoring and Support Automation

Continuous patient engagement and monitoring represent one of the most promising applications of healthcare automation, particularly for chronic disease management.

Remote Patient Monitoring (RPM)

Automated systems can collect patient data from wearable devices and home monitoring equipment, transmitting it directly to healthcare providers. This enables continuous condition management without requiring in-person visits.

For patients with conditions like hypertension, diabetes, or cardiac issues, RPM systems can detect concerning trends early, enabling interventions before complications develop. These systems automatically alert providers when readings fall outside predetermined parameters.
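The alerting step can be as simple as comparing each incoming reading with a configured range. In the sketch below the metric names and limits are placeholder values for illustration; in practice the parameters are set per patient by the care team.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Limit:
    low: float
    high: float

# Placeholder limits for illustration only; not clinical thresholds.
LIMITS = {"systolic_bp": Limit(90, 160), "heart_rate": Limit(50, 110)}

def out_of_range(readings: dict[str, float]) -> list[str]:
    """Return one alert message per reading that falls outside its configured range."""
    alerts = []
    for metric, value in readings.items():
        limit = LIMITS.get(metric)
        if limit is not None and not (limit.low <= value <= limit.high):
            alerts.append(f"{metric}={value} outside {limit.low}-{limit.high}")
    return alerts
```

Metrics without a configured limit are ignored rather than guessed at, so a new device type cannot trigger spurious alerts.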

Automated Patient Communication

Intelligent communication systems handle routine patient interactions through chatbots, voice assistants, and messaging platforms. These systems can answer common questions, provide medication reminders, send appointment confirmations, and deliver test results.

At Johns Hopkins Medicine, AI technology automates 30-40% of response tasks to patient messages, analyzing incoming inquiries and creating draft responses for clinician review. This significantly reduces inbox burden while maintaining quality of care.

Medication Management Automation

Medication-related errors represent a significant patient safety concern. Automation introduces systematic precision to medication processes.

Automated Dispensing Systems

Robotic pharmacy systems ensure accurate medication dispensing while minimizing labor costs. These systems can package, label, and track medications with far higher accuracy rates than manual processes, which is particularly important in hospital settings with high medication volumes.

Prescription Management

AI systems can automate prescription renewal requests, identify potential drug interactions, and even monitor adherence through connected systems. For health systems, these tools help ensure continuity of medication therapy while reducing administrative overhead.

Laboratory and Pharmacy Automation

Behind-the-scenes automation in labs and pharmacies creates ripple effects across healthcare organizations by accelerating diagnostic processes and ensuring medication safety.

Automated Laboratory Testing

Modern laboratory automation systems can process specimens, run analyses, and report results with minimal human intervention. This increases throughput while reducing the potential for human error in repetitive tasks.

AI-enhanced systems go further by flagging unusual results for priority review, correlating findings with clinical data, and even suggesting additional tests based on pattern recognition.

Pharmacy Inventory Management

Automated systems track medication inventory levels, anticipate needs based on usage patterns, and automatically generate orders for restocking. This prevents both shortages and overstocking of expensive medications while ensuring appropriate medication availability.
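A common way to turn usage patterns into restocking orders is the classic reorder-point rule: order when stock on hand drops to expected usage over the supplier lead time plus a safety buffer. The sketch below assumes that rule and a simple average of recent daily usage; the parameter names are ours, and real systems typically use richer forecasts.

```python
import math

def reorder_quantity(on_hand: int, daily_usage: list[float], lead_time_days: int,
                     safety_stock: int, target_days: int) -> int:
    """Units to order now, or 0 if stock is still above the reorder point."""
    avg_usage = sum(daily_usage) / len(daily_usage)
    reorder_point = avg_usage * lead_time_days + safety_stock
    if on_hand > reorder_point:
        return 0
    # Order up to a level that covers target_days of usage plus the buffer.
    target_level = avg_usage * target_days + safety_stock
    return max(0, math.ceil(target_level - on_hand))
```

With average usage of 10 units a day, a 3-day lead time, and a safety stock of 5, the reorder point is 35 units; at 30 units on hand and a 14-day target, the function orders 115.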

The Rise of Specialized AI Agents in Healthcare

Beyond task-specific automation, a new generation of AI agents is emerging that can orchestrate complex workflows across multiple systems and departments. These agents operate with greater autonomy and sophistication than previous automation tools.

What Makes an AI Agent Different?

Traditional automation follows predetermined rules, while AI agents incorporate reasoning, learning, and adaptation. They execute continuous “Sense–Decide–Act” loops, enabling them to interpret data, reason about context, and initiate appropriate interventions.

In practice, this means an AI agent can notice that a patient has missed a follow-up appointment, check for new lab results, assess whether those results warrant immediate attention, and then initiate appropriate outreach, all without human intervention.
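The missed-follow-up scenario maps naturally onto one pass of a Sense-Decide-Act loop. The sketch below is deliberately rule-based so the three stages are easy to see; a real agent would replace `decide` with a learned or reasoning component. The record fields and action names are assumptions for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class PatientState:
    missed_follow_up: bool
    lab_flags: list[str] = field(default_factory=list)  # e.g. ["abnormal"]

def sense(record: dict) -> PatientState:
    """Gather the signals the agent needs from a (hypothetical) patient record."""
    return PatientState(record["missed_follow_up"], record.get("lab_flags", []))

def decide(state: PatientState) -> str:
    """Choose an action; here simple rules stand in for the agent's reasoning."""
    if not state.missed_follow_up:
        return "no_action"
    return "urgent_outreach" if "abnormal" in state.lab_flags else "routine_reminder"

def act(action: str, outbox: list[str]) -> None:
    """Carry out the action; here that just means queueing it for delivery."""
    if action != "no_action":
        outbox.append(action)

def run_once(record: dict, outbox: list[str]) -> str:
    action = decide(sense(record))
    act(action, outbox)
    return action
```

Running `run_once` on a schedule, or on each record change, gives the continuous loop the text describes.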

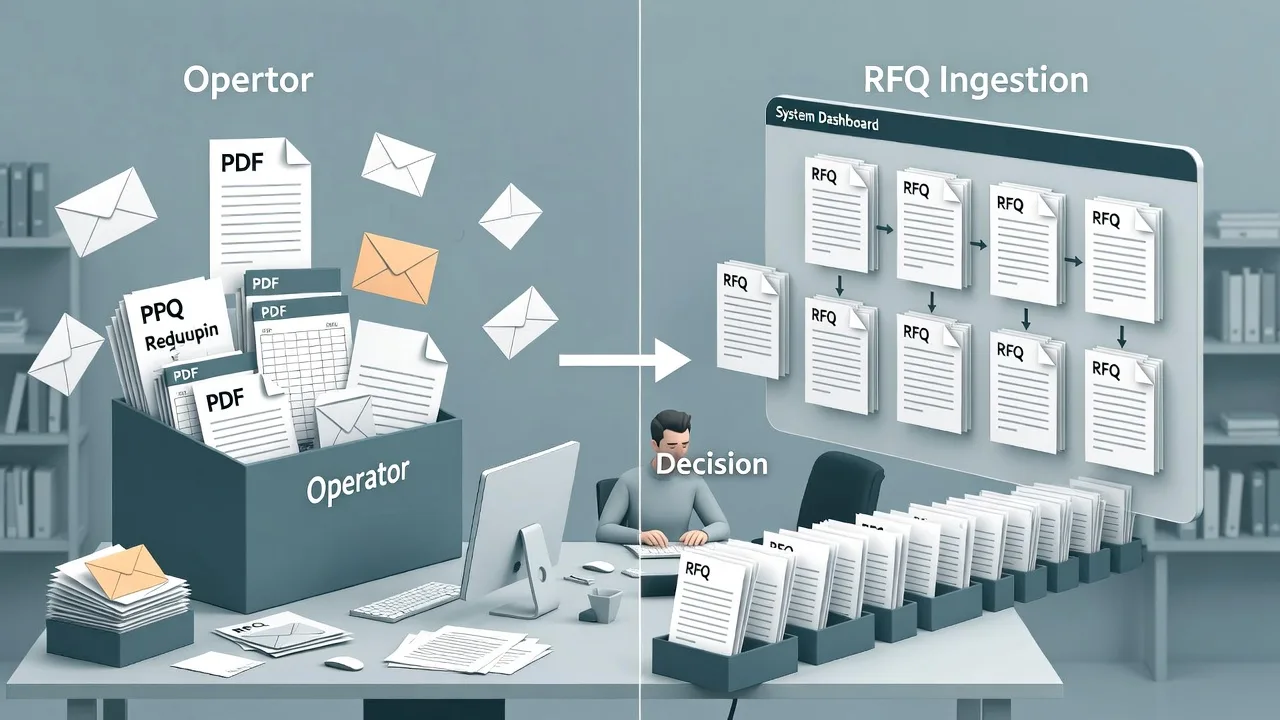

Multi-Agent Orchestration in Healthcare

The most sophisticated implementations involve multiple specialized agents working in coordination. In one deployment described by REW Technology, three separate agents for patient coordination, claims and compliance, and care follow-up worked through a shared orchestration layer to handle complex patient journeys.

When new lab results appeared in the system, these agents automatically coordinated: the Care Agent notified the doctor, the Claims Agent verified billing coverage, and the Coordination Agent reached out to the patient if follow-up was needed.
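A shared orchestration layer can be pictured as a small publish/subscribe hub: each agent registers for the events it cares about, and one lab-result event fans out to all three. This is our own minimal sketch of that idea, not the architecture of the deployment described; the event name, payload fields, and agent functions are all assumed.

```python
from collections import defaultdict

class Orchestrator:
    """Routes each event to every agent subscribed to that event type."""

    def __init__(self):
        self._subscribers = defaultdict(list)
        self.actions = []  # (agent_name, action) pairs, in dispatch order

    def subscribe(self, event_type, agent_name, handler):
        self._subscribers[event_type].append((agent_name, handler))

    def publish(self, event_type, payload):
        for agent_name, handler in self._subscribers[event_type]:
            action = handler(payload)
            if action is not None:
                self.actions.append((agent_name, action))

def care_agent(lab):
    return f"notify_doctor:{lab['patient_id']}"

def claims_agent(lab):
    return f"verify_coverage:{lab['patient_id']}"

def coordination_agent(lab):
    # Only contacts the patient when follow-up is needed.
    return f"contact_patient:{lab['patient_id']}" if lab["follow_up_needed"] else None

def build_orchestrator() -> Orchestrator:
    hub = Orchestrator()
    hub.subscribe("lab_result", "care", care_agent)
    hub.subscribe("lab_result", "claims", claims_agent)
    hub.subscribe("lab_result", "coordination", coordination_agent)
    return hub
```

Because agents only see events, a fourth agent can be added later without changing the existing three.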

Real-World Success Stories: Healthcare Automation in Action

HCA Healthcare: Streamlining Oncology Care

HCA Healthcare, one of the nation’s largest healthcare systems, implemented Azra AI’s clinical intelligence platform to automate oncology workflows. The system analyzes pathology reports in real-time to identify newly diagnosed cancer patients, automatically populating cancer registry fields and notifying nurse navigators.

The results were substantial: HCA reduced time from diagnosis to first treatment by six days, saved over 11,000 hours of manual report review, and added 10,000 new oncology patients within 14 months while enabling care teams to spend 65% more time coordinating patient care.

Northwestern Medicine: Accelerating Diagnostics

Northwestern Medicine deployed generative AI across its hospital network, achieving a 40% improvement in radiograph report turnaround without sacrificing accuracy. This acceleration directly impacts patient care by reducing time to diagnosis and treatment initiation.

University Hospitals: Enhancing Imaging Prioritization

University Hospitals implemented Aidoc’s AI platform across 13 hospitals to analyze medical images and prioritize critical cases. The system automatically flags findings like pneumothorax, aortic dissection, or pulmonary embolism, ensuring radiologists review the most urgent cases first.

Implementing Healthcare Automation: Key Considerations

Technical Infrastructure Requirements

Successful automation requires robust technical foundations. Key components include:

- FHIR-compatible APIs for seamless data exchange between systems

- Cloud infrastructure with appropriate security controls for protected health information

- Modular architecture that allows incremental implementation and scaling

- Interoperability standards enabling different systems and agents to communicate effectively
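To give a sense of what FHIR-based data exchange looks like, the sketch below builds a heart-rate reading as a FHIR Observation resource and serializes it to JSON. The field layout follows the FHIR R4 Observation structure and uses the LOINC heart-rate code; the patient identifier and helper name are made up for the example, and a real integration should be validated against the receiving server's profiles.

```python
import json

def heart_rate_observation(patient_id: str, bpm: int, when_iso: str) -> dict:
    """Build a minimal FHIR R4 Observation for one heart-rate reading."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "category": [{"coding": [{
            "system": "http://terminology.hl7.org/CodeSystem/observation-category",
            "code": "vital-signs",
        }]}],
        "code": {"coding": [{
            "system": "http://loinc.org", "code": "8867-4", "display": "Heart rate",
        }]},
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": when_iso,
        "valueQuantity": {
            "value": bpm, "unit": "beats/minute",
            "system": "http://unitsofmeasure.org", "code": "/min",
        },
    }

# "example-123" is a hypothetical patient id.
payload = json.dumps(heart_rate_observation("example-123", 72, "2025-01-01T09:00:00Z"))
```

Because every system agrees on this structure and on shared code systems, a monitoring device, an EHR, and an AI agent can exchange the same reading without custom mappings.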

Governance and Compliance

Healthcare automation must operate within strict regulatory frameworks:

- HIPAA compliance requires robust data encryption, access controls, and audit trails

- Transparency mechanisms should document automated decisions and actions

- Human oversight provisions ensure appropriate clinician review of critical decisions

- Regular auditing processes validate ongoing compliance and performance
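One way to make an audit trail tamper-evident is to chain entries together by hash, so that editing any past entry breaks every hash after it. HIPAA does not prescribe this particular technique; the sketch below is one possible design under that assumption, with field names chosen for illustration.

```python
import hashlib
import json

def _digest(entry: dict) -> str:
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()

def append_audit(log: list, actor: str, action: str, resource: str, timestamp: str) -> dict:
    """Append an entry whose hash covers its content and the previous entry's hash."""
    entry = {
        "actor": actor, "action": action, "resource": resource,
        "timestamp": timestamp, "prev_hash": log[-1]["hash"] if log else "",
    }
    entry["hash"] = _digest(entry)  # computed before the "hash" key is added
    log.append(entry)
    return entry

def verify(log: list) -> bool:
    """Recompute the chain; any edited or reordered entry makes this return False."""
    prev_hash = ""
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

Recording automated actions in the same log, with the agent as the actor, also supports the transparency and human oversight points above.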

Change Management

Technology implementation is only part of the equation. Successful automation requires:

- Clinician involvement in design and implementation decisions

- Phased rollout approaches that demonstrate value before expanding

- Comprehensive training programs tailored to different user groups

- Performance metrics that track both efficiency and quality outcomes

The Future of Healthcare Automation

As AI technologies advance, healthcare automation will become increasingly sophisticated and integrated. Several trends are particularly promising:

AI-Enhanced Diagnostics and Decision Support

Future systems will analyze broader data sets (including patient history, genomics, and lifestyle factors) to identify health risks before they materialize and recommend personalized prevention strategies. Companies like Tempus already use AI to personalize cancer treatments based on genetic markers.

Personalized Automated Care Plans

AI systems will generate highly individualized care plans that dynamically adjust based on patient progress and new data. This represents a shift from standardized protocols to truly personalized medicine delivered at scale.

Natural Language Processing Advances

Improved NLP will further automate medical transcription and clinical documentation. Systems will be able to record, transcribe, and summarize clinical conversations directly into EHR systems, dramatically reducing documentation burden.

Leading Healthcare AI Companies and Their Specializations

| Company | Primary Focus | Key Strengths |
|---|---|---|
| Nunar | Comprehensive AI agent development | 500+ production deployments, cross-workflow orchestration |
| IBM Watson Health | Clinical decision support | Natural language processing, evidence-based insights |
| Aidoc | Medical imaging analysis | Real-time prioritization of critical findings |
| Viz.ai | Stroke detection and care coordination | Automated CT analysis, clinical team alerts |
| PathAI | Digital pathology | Cancer detection and diagnostic support |
| Suki AI | Clinical documentation | Voice-enabled EHR interactions, note automation |
| Qure.ai | Diagnostic imaging | X-ray, CT, and MRI analysis for various conditions |
| Hippocratic AI | Patient communication | Safety-focused voice agents for engagement |

Embracing the Automation Journey

Healthcare automation is no longer a futuristic concept; it’s a present-day necessity for organizations seeking to deliver high-quality, sustainable care. The most successful implementations start with clear pain points, build on robust technical foundations, and prioritize human-AI collaboration.

At Nunar, our experience deploying over 500 AI agents has taught us that technology is only part of the solution. Equally important is the organizational willingness to reimagine workflows, invest in change management, and create structures for ongoing optimization.

The transformation of US healthcare through automation is inevitable. The question for healthcare leaders is not whether to adopt these technologies, but how quickly they can build the capabilities to leverage them effectively. The organizations that embrace this transition proactively will define the future of healthcare delivery.

People Also Ask

The primary categories are administrative automation (scheduling, billing, patient intake), diagnostic automation (medical imaging, symptom checkers), patient monitoring and support, medication management, and laboratory/pharmacy automation.

One hospital reduced data-related administrative tasks by 80% after implementation, while automated billing and coding systems can decrease administrative costs by up to 25%. One healthcare group automated 12,000 monthly interactions and reduced appointment coordination time by 70%.

Traditional automation follows predetermined rules for specific tasks, while AI agents can reason, learn, and orchestrate complex workflows across departments using continuous “Sense–Decide–Act” loops.